The scent of turpentine and the texture of wet clay are giving way to the hum of servers and the glow of LED screens. In studios once dominated by easels and kilns, a quiet revolution is underway, one where algorithms are the new brushes and data streams the new pigments. The convergence of art and technology, long a subject of fascination, has accelerated into a dominant trend that is reshaping the creative process. At the forefront of this movement are two seemingly disparate technologies: Artificial Intelligence Generated Content (AIGC) and advanced radar sensors. These tools are not merely novel gadgets; they are becoming integral partners for a new generation of artists, enabling them to perceive, interpret, and express reality in ways previously confined to science fiction.

The rise of AIGC marks a paradigm shift in artistic creation. For centuries, the artist's hand, guided by their unique vision and skill, was the sole author of a work. Today, artists are increasingly acting as curators, conductors, and collaborators with intelligent systems. Tools like Midjourney, Stable Diffusion, and DALL-E are not about replacing the artist but expanding their palette into the vast, uncharted territory of machine imagination. Artists like Refik Anadol have gained international acclaim by training AI models on massive datasets—from architectural blueprints to meteorological data—to generate breathtaking, fluid visualizations that transform entire building facades into living, breathing digital canvases. The artist's role evolves from crafting every pixel to designing the framework, the "creative DNA," that guides the AI. They set the parameters, choose the training data, and then engage in a dynamic dialogue with the machine, refining and iterating on the surprising, often uncanny, outputs it generates. This process blurs the lines between creator and tool, resulting in artworks that are a true synthesis of human intent and computational creativity.

Beyond the screen, a more physical and interactive form of tech-art is emerging, powered by radar sensors. While commonly associated with aviation and speed detection, radar technology has been miniaturized and refined into an exquisitely sensitive tool for capturing movement. Unlike cameras, which record recognizable 2D images and raise significant privacy concerns, radar sensors measure distance and motion with radio waves. They can detect the subtlest gestures, the tiny chest movements of breathing and heartbeat, and in some cases the presence of a person behind a wall. This capability has opened up new frontiers in interactive installation art. Artists are using radar to create environments that respond not just to overt actions, but to the faint, almost imperceptible signals of human presence. An installation might change its soundscape based on the collective breathing rate of its audience, or a visual projection might warp and flow in response to a viewer's hesitant approach. This creates a deeply intimate and empathetic connection between the artwork and the participant, an interaction based on presence and essence rather than a posed performance for a lens.
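To make the breathing-rate idea concrete, here is a minimal sketch of how such a signal might be read. It assumes the radar front end already delivers a chest-displacement time series for one person; real sensors need further steps, such as picking the right range bin and unwrapping phase, which are not shown. The function name `breathing_rate_bpm` and its parameters are hypothetical and not taken from any particular sensor's software.

```python
import math


def breathing_rate_bpm(displacement, sample_rate_hz,
                       low_hz=0.1, high_hz=0.5, step_hz=0.01):
    """Estimate breaths per minute as the strongest frequency in the breathing band.

    `displacement` is an assumed chest-displacement series (arbitrary units).
    The 0.1-0.5 Hz band (6-30 breaths per minute) is an illustrative choice.
    """
    if not displacement:
        raise ValueError("displacement must contain at least one sample")
    mean = sum(displacement) / len(displacement)
    centred = [d - mean for d in displacement]  # remove the constant offset

    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        # Correlate the signal with a cosine and a sine at this frequency.
        re = sum(c * math.cos(2 * math.pi * freq * i / sample_rate_hz)
                 for i, c in enumerate(centred))
        im = sum(c * math.sin(2 * math.pi * freq * i / sample_rate_hz)
                 for i, c in enumerate(centred))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0


# Synthetic test signal: slow breathing at 0.25 Hz plus a fainter 1.2 Hz heartbeat,
# sampled at 20 Hz for 40 seconds.
signal = [math.sin(2 * math.pi * 0.25 * i / 20.0)
          + 0.1 * math.sin(2 * math.pi * 1.2 * i / 20.0)
          for i in range(800)]
print(breathing_rate_bpm(signal, sample_rate_hz=20.0))
```

For this synthetic signal the estimate should land close to 15 breaths per minute. An installation could average such estimates across visitors and use the result to drive the tempo of a soundscape.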

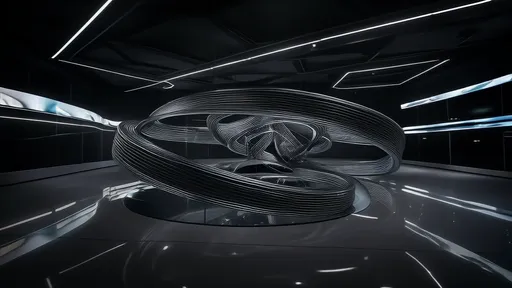

The most profound developments occur at the intersection of these two powerful tools. Artists are beginning to feed the rich, real-time spatial and biometric data captured by radar sensors directly into generative AI models. This creates a closed-loop system where the artwork is not pre-rendered but is born live, in response to its environment. Imagine an immersive room where radar maps the movements and proximities of visitors. This data is then processed by an AI, which generates a constantly evolving abstract visual narrative on the walls, a digital ecosystem that thrives on human interaction. The collective mood of the crowd, interpreted through their speed and grouping, could influence the color palette; a sudden, sharp movement might send a ripple effect through the generative form. This fusion of physical sensing and digital generation results in artworks that are truly alive and context-aware, offering a unique experience that can never be perfectly replicated.
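The loop described above can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any artist's actual system: the `Track` record stands in for whatever a radar tracker reports, and the thresholds (`max_speed`, `max_spread`, `ripple_speed`) and the color mapping are invented for the example. A real piece would pass the resulting parameters to its own generative model or renderer.

```python
import colorsys
import math
from dataclasses import dataclass


@dataclass
class Track:
    """One visitor, as a hypothetical radar tracker might report them."""
    x: float   # position in metres
    y: float
    vx: float  # velocity in metres per second
    vy: float


def crowd_features(tracks):
    """Return (mean speed, mean pairwise distance) for the current frame."""
    if not tracks:
        return 0.0, 0.0
    mean_speed = sum(math.hypot(t.vx, t.vy) for t in tracks) / len(tracks)
    pairs = [
        math.hypot(a.x - b.x, a.y - b.y)
        for i, a in enumerate(tracks)
        for b in tracks[i + 1:]
    ]
    spread = sum(pairs) / len(pairs) if pairs else 0.0
    return mean_speed, spread


def scene_parameters(tracks, max_speed=2.0, max_spread=6.0, ripple_speed=1.5):
    """Map crowd features to parameters a generative renderer could consume."""
    mean_speed, spread = crowd_features(tracks)
    energy = min(mean_speed / max_speed, 1.0)    # 0 = still, 1 = fast crowd
    openness = min(spread / max_spread, 1.0)     # 0 = clustered, 1 = dispersed
    # Slow crowds get a cool blue hue (0.6); fast crowds a warm red hue (0.0).
    hue = 0.6 * (1.0 - energy)
    # Tightly grouped crowds get more saturated color.
    saturation = 0.4 + 0.6 * (1.0 - openness)
    r, g, b = colorsys.hsv_to_rgb(hue, saturation, 1.0)
    # Any single visitor moving faster than ripple_speed triggers a ripple.
    ripple = any(math.hypot(t.vx, t.vy) > ripple_speed for t in tracks)
    return {"rgb": (round(r, 3), round(g, 3), round(b, 3)), "ripple": ripple}


# Two visitors standing still, five metres apart.
calm = [Track(0.0, 0.0, 0.0, 0.0), Track(3.0, 4.0, 0.0, 0.0)]
print(scene_parameters(calm))
```

Calling `scene_parameters` once per radar frame yields a stream of palette and ripple values, which is what makes the piece "born live" rather than pre-rendered.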

This new toolkit is not without its controversies and challenges. The art world is grappling with complex questions of authorship and originality. If an AI generates the primary visual, who is the true artist—the programmer, the user who crafted the prompt, or the AI itself? The legal and philosophical frameworks for these questions are still in their infancy. Furthermore, the accessibility of these powerful tools raises concerns about the potential for homogenization of style and the devaluation of traditional technical skills. There is a risk that the distinctive "look" of a popular AI model could become a new artistic cliché. However, many artists argue that these tools, like the invention of the camera or Photoshop, will ultimately push creativity forward. The true artist will not be the one who can generate the most photorealistic image with a single prompt, but the one who can wield these tools with conceptual depth, using them to ask new questions about perception, reality, and our relationship with technology.

Ultimately, the integration of AIGC and radar sensors signifies more than just a change in technique; it represents a fundamental expansion of what art can be. Art is no longer solely a static object to be observed. It is becoming an intelligent, responsive, and generative entity. It can be a dynamic data portrait of a city, a room that breathes with its inhabitants, or a collaborative performance between humans and algorithms. These technologies are providing artists with a new language—a language of data, interaction, and emergence. As artists continue to explore this uncharted territory, they are not just adopting new tools; they are helping to define the future of human expression in an increasingly technological world, proving that the heart of art remains human, even when its hands are digital.

By /Sep 26, 2025
